I’m deeply honored to be appointed by Governor Youngkin to serve on Virginia’s inaugural AI Task Force. This distinguished group of leaders from academia, nonprofits, and industry will advise policymakers on harnessing AI to transform government services, streamline regulations, and position Virginia as a leader in responsible AI innovation. As we unlock AI’s potential to improve efficiency and reduce burdens on state agencies, we must also ensure thoughtful safeguards to protect privacy, fairness, and public trust.

Why the Veto of California Senate Bill 1047 Could Lead to Safer AI Policies

Gov. Gavin Newsom’s recent veto of California’s Senate Bill (SB) 1047, known as the Safe and Secure Innovation for Frontier Artificial Intelligence Models Act, reignited the debate about how best to regulate the rapidly evolving field of artificial intelligence. Newsom’s veto illustrates a cautious approach to regulating a new technology and could pave the way for more pragmatic AI safety policies.

The Deception Dilemma: When AI Misleads

An emerging body of research suggests that large language models (LLMs) can “deceive” humans by offering fabricated explanations for their behavior or concealing the truth of their actions from human users. The implications are worrisome, particularly because researchers do not fully understand why or when LLMs engage in this behavior.

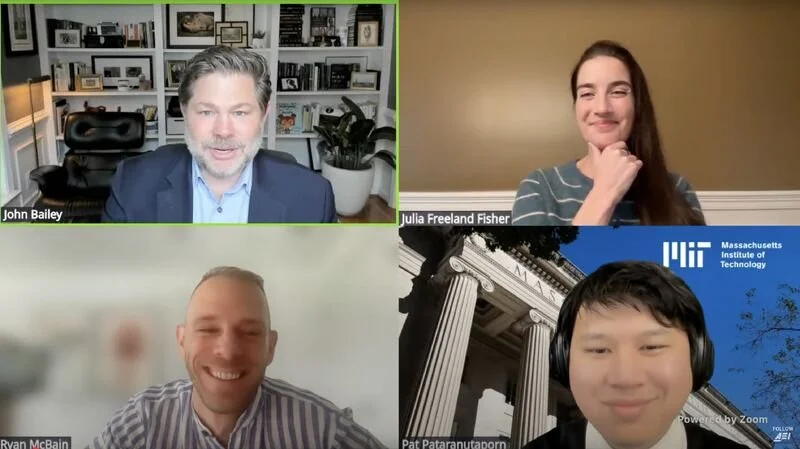

The Promise and Limitations of AI in Education: A Nuanced Look at Emerging Research

New research reveals the transformative potential and complex realities of AI in education. From AI-powered grading systems that match human accuracy while saving countless hours, to AI tutors that both enhance and hinder learning, to AI's ability to reason like humans and predict social science experiments, these studies paint a nuanced picture of AI's role in the future of education. As the field rapidly evolves, enthusiasts and skeptics alike must grapple with the profound implications of AI for teaching and learning and ensure their views are shaped by research.

Preserving Trust and Freedom in the Age of AI

I was excited to contribute to a group of over 20 prominent AI researchers, legal experts, and tech industry leaders from institutions including OpenAI, the Partnership on AI, Microsoft, the University of Oxford, a16z crypto, and MIT on a paper proposing "personhood credentials" (PHCs) as a potential solution to the growing challenge of AI-powered online deception. PHCs would provide a privacy-preserving way for individuals to verify their humanity online without disclosing personal information. While implementation details remain to be determined, the core concept warrants serious consideration from policymakers and tech leaders as AI capabilities rapidly advance, threatening to erode the trust and accountability essential for societies to function.

Open AI Models: A Step Toward Innovation or a Threat to Security?

The increasing capabilities of AI have sparked an important debate: Should the "weights" that define a model's intelligence be openly accessible or tightly controlled? This article dives deep into the arguments on both sides. Proponents say open-weight models accelerate innovation, enable greater scrutiny for safety, and democratize AI capabilities. Critics warn of risks like misuse for disinformation or military purposes by adversaries. The piece examines recent developments like frontier open-weight models from Meta and Mistral, Mark Zuckerberg's case for openness, concerns about China's AI ambitions, and the US government's cautious approach.

Charting a Bipartisan Course: The Senate’s Roadmap for AI Policy

The recent AI policy roadmap from the bipartisan AI working group in the Senate strikes a thoughtful balance between promoting AI innovation and addressing potential risks. It lays out a nuanced approach including increased AI safety efforts, crucial investments in domestic STEM talent, protections for children in the age of AI, and "grand challenge" programs to spur breakthroughs, all while avoiding hasty overregulation that could stifle progress.

An AI Healthcare Coalition Suggests a Better Way of Approaching Responsible AI

In the rapidly evolving landscape of artificial intelligence (AI), the dialogue often veers between the extremes of stringent regulation, like the European Union’s AI Act, and laissez-faire approaches that risk unbridled technological advances without sufficient safeguards. Amidst this polarized debate, the Coalition for Health AI (CHAI) has emerged as a promising alternative approach that addresses the ethical, social, and economic complexities introduced by AI, while also supporting continued innovation.

Understanding the Capabilities of GenAI

It's difficult to understand some technologies because they're better experienced than described. I've found GenAI to be one example where it's difficult to grasp the full range of capabilities unless you see some of it in action. Over the last year, I've given a number of presentations that tried to contextualize GenAI for the audience by demonstrating relevant use cases. I compiled them in this long master deck, which I periodically update and am sharing in the hope that it may spark some ideas for you.

Faster, Please! Podcast Discussion on AI

I had a great time joining James Pethokoukis on his podcast, Faster, Please! We touched on a number of areas including how AI can help improve teaching and learning.

Why AI Struggles with Basic Math (and How That’s Changing)

Large Language Models (LLMs) have ushered in a new era of artificial intelligence (AI), demonstrating remarkable capabilities in language generation, translation, and reasoning. Yet LLMs often stumble over basic math problems, which limits their use in settings, including education, where math is essential. These limitations are being addressed through improvements in the models themselves along with better prompting strategies.